Recently (around 14 December 2022), Apple’s Machine Learning Research team published “Stable Diffusion with Core ML on Apple Silicon”, with Python and Swift source code optimized for Apple Silicon (M1/M2), on GitHub at apple/ml-stable-diffusion. Here I’m trying it out on a MacBook (though the code also works on iPhones and iPads)...

This is part 7 (ish) in what is turning out to be a series:

- (16 Sep 22): AI-generated images with Stable Diffusion on an M1 mac a.k.a. txt2img

- (17 Sep 22): Stable Diffusion image-to-image mode a.k.a. img2img

- (21 Sep 22): My simplified Stable Diffusion Python script a.k.a. txtimg2img

- (26 Sep 22): Stable Diffusion script with inpainting mask a.k.a. mask+txtimg2img

- (28 Sep 22): Adding CLIPSeg automatic masking to Stable Diffusion a.k.a. txt2mask+txtimg2img

- (9 Oct 22): the original version of my code, myByways Simple-SD v1.0 Python script

- (18 Dec 22): this post Fast Stable Diffusion using Core ML on M1

(29 Jul 23): All of the above is now outdated. Jump to Stable Diffusion SDXL 1.0 with ComfyUI instead.

Installation

First, set up the prerequisites: clone the Python code into a folder and create a virtual environment with the dependencies installed.

git clone https://github.com/apple/ml-stable-diffusion

cd ml-stable-diffusion

mkdir models outputs

python3 -m pip install virtualenv

python3 -m virtualenv v2

source v2/bin/activate

pip install -e .

pip install --upgrade torch==1.12.1

pip install --upgrade numpy==1.23.1

I downgraded two modules:

- PyTorch 1.12.1, to resolve this warning message:

Torch version 1.13.1 has not been tested with coremltools. You may run into unexpected errors. Torch 1.12.1 is the most recent version that has been tested.

- NumPy 1.23.1, to resolve this error message:

AttributeError: module 'numpy' has no attribute 'bool'. Did you mean: 'bool_'?
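To confirm that the virtual environment really ended up with the two downgraded versions, a quick check along these lines may help. It is only a sketch: `PINS`, `installed_version` and `check_pins` are names I made up for illustration, and it assumes the installed version strings match the pins exactly (no local build suffix).

```python
from importlib import metadata

# Pins taken from the two pip commands above.
PINS = {"torch": "1.12.1", "numpy": "1.23.1"}


def installed_version(package):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_pins(pins, lookup=installed_version):
    """Return {package: (expected, found)} for every pin that does not match."""
    mismatches = {}
    for package, expected in pins.items():
        found = lookup(package)
        if found != expected:
            mismatches[package] = (expected, found)
    return mismatches


if __name__ == "__main__":
    # Prints nothing when both pins are satisfied.
    for package, (expected, found) in check_pins(PINS).items():
        print(f"{package}: expected {expected}, found {found}")
```

Run it inside the activated v2 environment; any line it prints points at a module that still needs the downgrade.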

Model Conversion

Next, convert the models to Core ML. This step also downloads the original models, which requires a Hugging Face (huggingface.co) login with a User Access Token. Currently, the code supports v1.4 and v1.5, each of which is ~5.5 GB in total across 15-16 files.

huggingface-cli login

python -m python_coreml_stable_diffusion.torch2coreml --convert-unet --convert-text-encoder --convert-vae-decoder --convert-safety-checker -o models

python -m python_coreml_stable_diffusion.torch2coreml --convert-unet --convert-text-encoder --convert-vae-decoder --convert-safety-checker -o models --model-version 'runwayml/stable-diffusion-v1-5'

After some waiting... the converted Core ML models are available in the models folder. For each version, the converted models are stored in four .mlpackage folders, totaling ~2.5 GB per version:

- Stable_Diffusion_version_CompVis_stable-diffusion-v1-4_safety_checker.mlpackage / Stable_Diffusion_version_runwayml_stable-diffusion-v1-5_safety_checker.mlpackage

- Stable_Diffusion_version_CompVis_stable-diffusion-v1-4_text_encoder.mlpackage / Stable_Diffusion_version_runwayml_stable-diffusion-v1-5_text_encoder.mlpackage

- Stable_Diffusion_version_CompVis_stable-diffusion-v1-4_unet.mlpackage / Stable_Diffusion_version_runwayml_stable-diffusion-v1-5_unet.mlpackage

- Stable_Diffusion_version_CompVis_stable-diffusion-v1-4_vae_decoder.mlpackage / Stable_Diffusion_version_runwayml_stable-diffusion-v1-5_vae_decoder.mlpackage
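The folder names above appear to follow a simple pattern: a fixed prefix, the model version with the slash replaced by an underscore, then the component name. Assuming that pattern holds (I inferred it from the listing, not from the converter's source), a small sketch like this can report which packages are still missing after a conversion run. The helper names `expected_packages` and `missing_packages` are hypothetical.

```python
from pathlib import Path

# Component suffixes as they appear in the folder listing above.
COMPONENTS = ("safety_checker", "text_encoder", "unet", "vae_decoder")


def expected_packages(model_version):
    """Build the four .mlpackage folder names for a model version string."""
    # Assumption: "/" in the model version becomes "_" in the folder name.
    prefix = "Stable_Diffusion_version_" + model_version.replace("/", "_")
    return [f"{prefix}_{component}.mlpackage" for component in COMPONENTS]


def missing_packages(models_dir, model_version):
    """Return the expected package names that do not exist in models_dir."""
    root = Path(models_dir)
    return [name for name in expected_packages(model_version)
            if not (root / name).exists()]


if __name__ == "__main__":
    for version in ("CompVis/stable-diffusion-v1-4",
                    "runwayml/stable-diffusion-v1-5"):
        print(version, "missing:", missing_packages("models", version))
```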

Then, add --bundle-resources-for-swift-cli to generate a pre-compiled Resources bundle for Swift:

python -m python_coreml_stable_diffusion.torch2coreml --convert-unet --convert-text-encoder --convert-vae-decoder --convert-safety-checker -o models --bundle-resources-for-swift-cli

mv models/Resources models/Resources14

python -m python_coreml_stable_diffusion.torch2coreml --convert-unet --convert-text-encoder --convert-vae-decoder --convert-safety-checker -o models --model-version 'runwayml/stable-diffusion-v1-5' --bundle-resources-for-swift-cli

mv models/Resources models/Resources15

The output is always placed in a Resources folder, so I rename it after each run to avoid a version collision. Each Resources bundle takes up another ~2.5 GB per version.

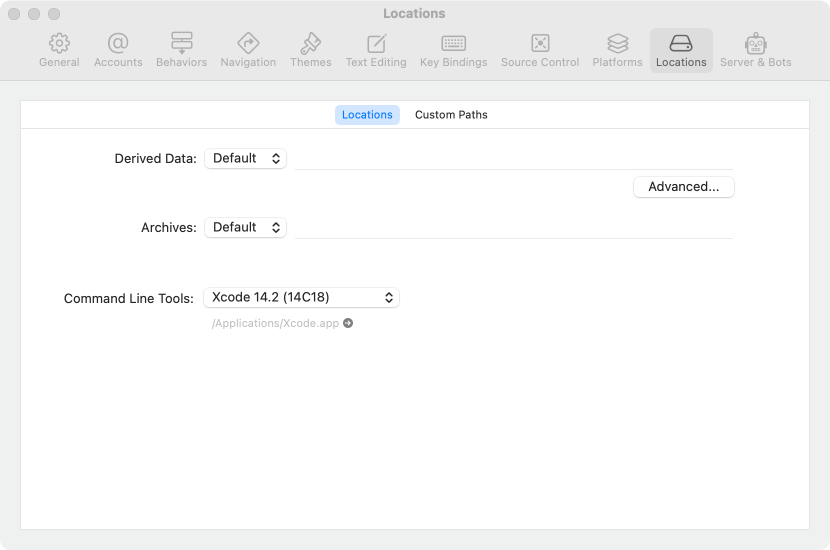
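Since every conversion run writes to the same Resources folder, forgetting the rename means the next run overwrites the previous bundle. Here is a minimal sketch of a safer rename step, assuming the same models/Resources layout as above; `stash_resources` is a hypothetical helper of mine, not part of Apple's code.

```python
import shutil
from pathlib import Path


def stash_resources(models_dir, suffix):
    """Rename models_dir/Resources to models_dir/Resources<suffix>.

    Refuses to overwrite an existing target, unlike a plain `mv`.
    """
    root = Path(models_dir)
    source = root / "Resources"
    target = root / f"Resources{suffix}"
    if not source.is_dir():
        raise FileNotFoundError(f"{source} not found; did the conversion finish?")
    if target.exists():
        raise FileExistsError(f"{target} already exists; refusing to overwrite")
    shutil.move(str(source), str(target))
    return target
```

For example, `stash_resources("models", "14")` after the v1.4 run and `stash_resources("models", "15")` after the v1.5 run would produce the same layout as the two mv commands.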

If you get the error xcrun: error: unable to find utility "coremlcompiler", not a developer tool or in PATH, and you are sure both Xcode and the Xcode Command Line Tools are installed (xcode-select --install), then maybe this will help: open Xcode.app > Settings... > Locations and select the Command Line Tools entry to “reconnect” the command line tools. Afterwards, you should be able to run xcrun coremlcompiler from the terminal.
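To test for this problem before starting a long conversion, something like the following sketch could be run first. It relies on xcrun's --find option to locate the tool; `find_coremlcompiler` is a hypothetical helper name, and it simply returns None on machines without xcrun.

```python
import shutil
import subprocess


def find_coremlcompiler():
    """Return the path xcrun reports for coremlcompiler, or None if unavailable."""
    if shutil.which("xcrun") is None:
        return None  # Not on macOS, or the command line tools are not installed.
    result = subprocess.run(
        ["xcrun", "--find", "coremlcompiler"],
        capture_output=True,
        text=True,
    )
    if result.returncode != 0:
        return None  # Same situation as the "unable to find utility" error.
    return result.stdout.strip() or None


if __name__ == "__main__":
    path = find_coremlcompiler()
    print(path if path else "coremlcompiler not found; check Xcode > Settings > Locations")
```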

Run Stable Diffusion in Python

At last, we can now try out the Apple-provided Python code to run Stable Diffusion. I notice it first loads the original PyTorch models, before replacing them with the .mlpackage Core ML models in compile-on-load mode. It sure takes a long time to run! For me, the run time is practically in the same ballpark as using the original Python model.

python -m python_coreml_stable_diffusion.pipeline --prompt "a photo of an astronaut riding a horse on mars" -i models -o outputs

You can also add TRANSFORMERS_OFFLINE=1 HF_DATASETS_OFFLINE=1 before the python command to use the already downloaded models.
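If you prefer to launch the pipeline from a script rather than typing the variables each time, the offline setup can be sketched roughly like this. The helper names (`offline_env`, `pipeline_command`, `run_offline`) are mine; the module name and flags are the ones from the command above.

```python
import os
import subprocess
import sys


def offline_env(base=None):
    """Copy an environment and add the two Hugging Face offline switches."""
    env = dict(os.environ if base is None else base)
    env["TRANSFORMERS_OFFLINE"] = "1"
    env["HF_DATASETS_OFFLINE"] = "1"
    return env


def pipeline_command(prompt, models_dir="models", output_dir="outputs"):
    """Build the same command line as above, using the current interpreter."""
    return [
        sys.executable, "-m", "python_coreml_stable_diffusion.pipeline",
        "--prompt", prompt,
        "-i", models_dir,
        "-o", output_dir,
    ]


def run_offline(prompt):
    """Run the pipeline with the offline variables set (needs the v2 venv)."""
    return subprocess.run(pipeline_command(prompt), env=offline_env(), check=True)
```

Calling `run_offline("a photo of an astronaut riding a horse on mars")` from inside the virtual environment should behave like the prefixed shell command.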

Run Stable Diffusion in Swift

However, Python does not support loading pre-compiled optimized models... so the final test is to run Apple’s Swift version which can load precompiled .mlmodelc models.

swift run StableDiffusionSample "a photo of an astronaut riding a horse on mars" --resource-path models/Resources14 --output-path outputs

swift run StableDiffusionSample "a photo of an astronaut riding a horse on mars" --resource-path models/Resources15 --output-path outputs --seed 123456

For me, the Swift version is an incredible 4x faster (though not on the first run, as the code needs to be compiled first). Stable Diffusion on M1 is now really, really FAST!
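To put your own number on the speed-up, a rough wall-clock comparison can be sketched as below. This is not how I measured the figure above; `time_command` and `speedup` are hypothetical helpers, and the timings include model loading as well as image generation.

```python
import subprocess
import time


def time_command(command):
    """Run a command to completion and return the elapsed wall-clock seconds."""
    start = time.perf_counter()
    subprocess.run(command, check=True)
    return time.perf_counter() - start


def speedup(baseline_seconds, candidate_seconds):
    """How many times faster the candidate is compared to the baseline."""
    return baseline_seconds / candidate_seconds
```

Pass each command as a list of arguments, for example the Python pipeline command as the baseline and the swift run command as the candidate. Time the Swift command on its second run, so that the one-off compilation is not counted.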